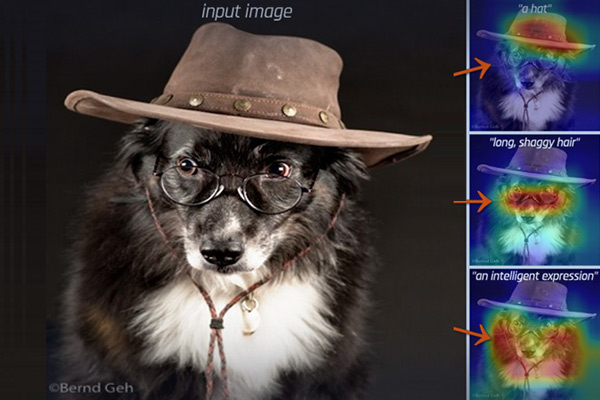

Modern deep neural networks have now reached human-level performance across a variety of tasks. However, unlike humans, they cannot explain their decisions by showing where they looked and telling which concepts guided them.

In this work, we present a unified framework for transforming any vision neural network into a spatially and conceptually interpretable model.

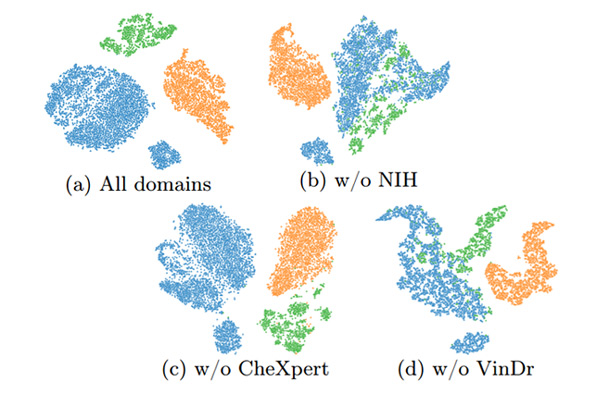

Medical imaging datasets often vary due to differences in acquisition protocols, patient demographics, and imaging devices. These variations in data distribution, known as domain shift, present a significant challenge in adapting imaging analysis models for practical healthcare applications.

In this work, we introduce HyDA, a novel hypernetwork framework that leverages domain-specific characteristics rather than suppressing them, enabling dynamic adaptation at inference time.